Below is an exchange I had with a student following a workshop about research practices. The issue, I think, is at the heart of many of the problems we have in contemporary science. In biology, there is a rush to generate data to “prove” pre-conceived models. Sometimes, the tail starts wagging the dog. The whole point of doing science, which is to generate new knowledge that enables us to make robust predictions, gets lost. A laboratory leader (PI, or Principal Investigator, in academic parlance) dangles the big shiny carrot of a high-profile publication in front of a student or a postdoc, and then simply expects them to rush to the bench and deliver whatever data will convince editors and reviewers. The view of science as an effort to falsify a given hypothesis, no matter how enamored the scientist is with that hypothesis, is turned upside down. It’s a corruption of the scientific method, plain and simple. And it’s happening all the time in laboratories near yours.

It’s no wonder that we have this #ScienceCrisis. The whole academic culture is fast spinning into a negative spiral where the volume of science outputs, rather than their quality, is what matters. The pressure on students and postdocs to deliver the “right” data can be unbearable. More often than not, they are also in a precarious personal and professional position, depending on the PI for their career advancement, for validation of their visa or immigration status, or even simply to remain employed as a scientist. There are even reports that some PIs specifically target vulnerable foreign scientists so they can pressure and bully them.

A surprising number of the students and postdocs I talk to tell me that they have never had any formal training in how to do science, in the cycles of deductive, evidence-based experimental research. How to develop a hypothesis. How to draw conclusions from your data. The importance of robust and well-controlled experiments. How to challenge your findings. The concept of orthogonal replication. Keplerian thinking, arguably what distinguishes the scientists who produce long-lasting discoveries, seems to be an alien concept to the great majority of biologists. The view that data should be sacrosanct, rather than a convenient route to publication, seems fuzzy in many minds.

To illustrate my point, here is that exchange with the student. This was their original question: “How do you deal with negative results in daily life and in the context of publications?”

I replied, “I will answer you, but first can you please tell me what you consider a negative result?”

The student then wrote, “That would be results that contradict or are inconsistent with the hypothesis.”

This answer, sadly, didn’t surprise me. It confirmed exactly what I had guessed about the mindset behind the question.

Here is, verbatim, what I ended up writing to the student.

“If you have a solid, robust result that contradicts your hypothesis, then it’s good news because you can draw a solid conclusion: your hypothesis is wrong and you need a new hypothesis. I don’t have a problem with this type of ‘negative’ result because it is one step forward in discovering what the real mechanism/model is. It’s one step closer to the reality we try to uncover as scientists.

To me, an experiment has failed when it is inconclusive. That’s when I get disappointed. This can be because of technical issues, but more often than not the experiment wasn’t correctly designed and lacks proper controls etc., which means that you cannot really draw anything from it (example: an assay without positive and negative controls). There are also cases where the controls don’t work as expected (example: a positive signal with the negative control). This indicates that the method is not robust and you are probably better off finding other methods to test your hypothesis.

Also, once an experiment is consistent with your hypothesis, the best researchers still try to find other methods/approaches to challenge (falsify) the hypothesis. This is known as orthogonal replication, where you challenge your hypothesis with multiple independent methods. This is the best way of doing science and allows you to proceed on solid footing. Papers with orthogonal replication are the best and most likely to stand the test of time. The worst papers are the ones that jump from one shaky result to another with little orthogonal replication. Those papers are less likely to be reproducible and more likely to drift into oblivion as time passes. They are also expensive papers because others will try to reproduce them and fail. The cost of bad science to the community is very high. It’s your responsibility to produce solid and reproducible data.”

My message is that we need to appreciate the distinctions between a failed, an inconclusive, and a conclusive experiment. A failed experiment is one that was poorly executed, poorly controlled or simply failed on technical grounds. If an experiment is well controlled and conducted to the best of current knowledge, techniques, and research abilities, then strictly speaking it has not failed. It may be inconclusive because we cannot draw any solid findings from the data, but otherwise the “conclusion” could very well be that the result doesn’t confirm the original hypothesis. That’s still a conclusion. We just have to go back to the drawing board and think up new hypotheses based on the newly gained knowledge.

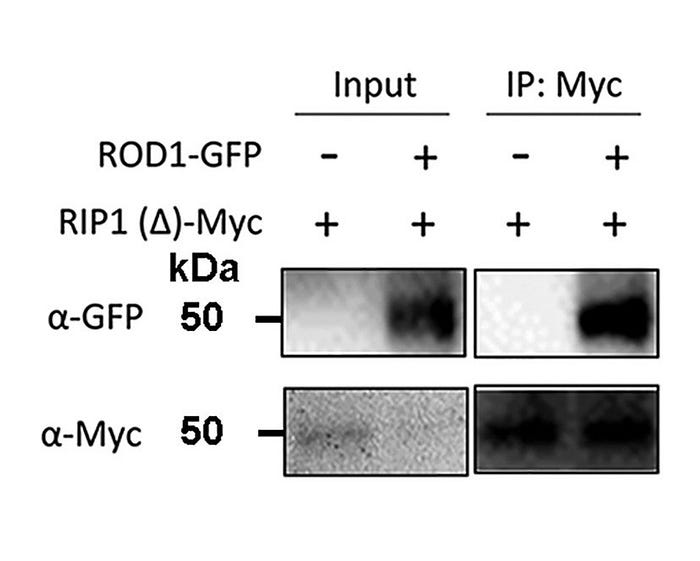

Sadly, I frequently see the following approach to experimental biology. Say a student is tasked with showing that protein A interacts with protein B. (That framing is itself wrong: the student should be tasked with asking whether A binds B, not with confirming a pre-conceived model.) They perform a badly controlled experiment, say co-immunoprecipitation or bimolecular fluorescence complementation (BiFC), methods that are technically sensitive and prone to false positives. And I mean a really badly controlled experiment, one lacking the proper negative controls, for example. They get the result they wanted (or that their boss wanted), and they move on. Everyone is happy. They might very well get it past reviewers and published, even in leading journals (see the Figure below for a spectacular example).

This is what I am calling a useless and inconclusive experiment. If the experiment had been correctly controlled and did not yield evidence that protein A interacts with B, then I wouldn’t call it a failed experiment, and certainly not an inconclusive one. The conclusion would be that, under the selected experimental conditions, A doesn’t interact with B. Perhaps that’s not what the scientists were wishing for, but it’s a conclusion nonetheless. Science isn’t built on wishful thinking.

What to do? Of course, we’re all under pressure to deliver and publish our work. But don’t adopt a cynical attitude. Be a scientist in the true meaning of the term and be proud of it. Strive to do the best science you can under your own circumstances. Reputations do matter. Early in my career, I read the wonderful booklet “On Being a Scientist”, first published by the National Academy Press in 1989. It is a must-read in my opinion because it states in simple terms the standards we should adopt to deal with the ethical dilemmas we face as scientists. One quote deeply inspired me and has served as my moral compass all these years: “Some people succeed in science despite their reputations. Many more succeed at least in part because of their reputations.”

Incidentally, the student who asked that question wasn’t your average student: they had outstanding credentials and were deservedly recruited to a highly selective, elite PhD program. Perhaps the lesson to take from all this is that, in this age of complex technical training, we are not placing enough emphasis on teaching how to do science. We need more formal training in the practice and theory of science. With Stephen Bornemann, our Director of Postgraduate Research and Training at The Sainsbury Laboratory, we are planning just that. Stay tuned.

Suggested readings

On Being a Scientist: Responsible Conduct in Research. National Academy Press.

Seager, T. Science does not ask “Why?”. Medium.